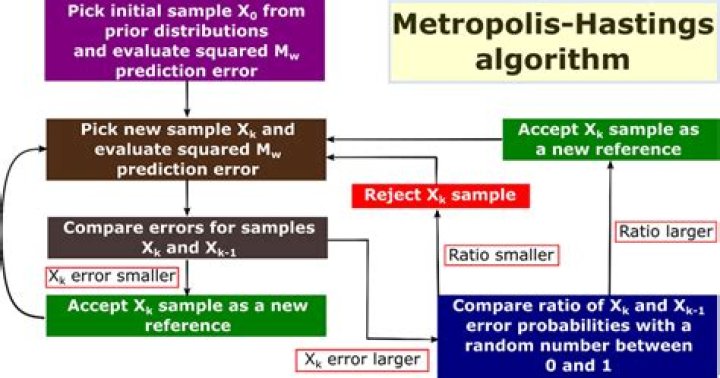

How does the Metropolis-Hastings algorithm work?

The Metropolis-Hastings (MH) algorithm is a simple method for producing samples from distributions that are otherwise difficult to sample from. It works by simulating a Markov chain whose stationary distribution is the target distribution π, so that the states the chain visits can, after enough steps, be treated as (correlated) draws from π.
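As a rough illustration of the idea, here is a minimal random-walk sketch in Python. The target (a standard normal), the Gaussian proposal, and the names `log_target`, `metropolis_hastings`, and `step_size` are assumptions made for this example, not part of any particular library.

```python
import math
import random


def log_target(x):
    # Unnormalized log-density of the target pi (assumed: standard normal).
    return -0.5 * x * x


def metropolis_hastings(log_pi, x0, n_steps, step_size=1.0, seed=0):
    """Random-walk Metropolis-Hastings; returns the list of visited states."""
    rng = random.Random(seed)
    x = x0
    samples = []
    for _ in range(n_steps):
        # Propose a move from a symmetric Gaussian centred on the current state.
        proposal = x + rng.gauss(0.0, step_size)
        # For a symmetric proposal the acceptance probability is
        # min(1, pi(proposal) / pi(x)), computed here on the log scale.
        accept_prob = math.exp(min(0.0, log_pi(proposal) - log_pi(x)))
        if rng.random() < accept_prob:
            x = proposal  # accept; otherwise the chain stays where it is
        samples.append(x)
    return samples


samples = metropolis_hastings(log_target, x0=0.0, n_steps=20000)
mean = sum(samples) / len(samples)
var = sum((s - mean) ** 2 for s in samples) / len(samples)
print(f"mean={mean:.3f}, variance={var:.3f}")  # should be close to 0 and 1
```

Only ratios of π appear in the acceptance step, so the target needs to be known only up to a normalizing constant.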

What is Metropolis-Hastings sampling?

In statistics and statistical physics, the Metropolis–Hastings algorithm is a Markov chain Monte Carlo (MCMC) method for obtaining a sequence of random samples from a probability distribution from which direct sampling is difficult.

Which of the following is a requirement of the simple Metropolis algorithm?

The proposal distribution must be symmetric: the probability of proposing a move from A to B must equal the probability of proposing a move from B to A. The parameters do not have to be discrete.

What is acceptance rate MCMC?

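The acceptance rate is the fraction of proposed moves that the sampler accepts. A very high rate, such as 99%, usually means the proposals are too small: almost every move is accepted, but the chain explores the distribution slowly. If you see something like this, the first step is to increase the jump proposal size.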
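To see how the acceptance rate responds to the proposal size, the sketch below runs a random-walk Metropolis sampler on a toy standard normal target with three different step sizes. The function name `acceptance_rate`, the target, and the step sizes are assumptions chosen for illustration.

```python
import math
import random


def acceptance_rate(step_size, n_steps=5000, seed=0):
    """Fraction of accepted proposals for random-walk Metropolis on a
    standard normal target (an assumed toy target)."""
    rng = random.Random(seed)
    x = 0.0
    accepted = 0
    for _ in range(n_steps):
        proposal = x + rng.gauss(0.0, step_size)
        # log of pi(proposal) / pi(x) for pi(x) proportional to exp(-x^2 / 2)
        log_ratio = 0.5 * (x * x - proposal * proposal)
        if rng.random() < math.exp(min(0.0, log_ratio)):
            x = proposal
            accepted += 1
    return accepted / n_steps


for step in (0.01, 2.4, 50.0):
    print(f"step_size={step}: acceptance rate = {acceptance_rate(step):.2f}")
```

Tiny steps are accepted almost always, while very large steps are rejected most of the time. Neither extreme mixes well, which is why the acceptance rate is a useful tuning diagnostic.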

What is Gibbs sampling used for?

Gibbs sampling is commonly used for statistical inference (e.g. determining the best value of a parameter, such as determining the number of people likely to shop at a particular store on a given day, the candidate a voter will most likely vote for, etc.).

What is burn-in in MCMC?

Burn-in is a colloquial term that describes the practice of throwing away some iterations at the beginning of an MCMC run.
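A small sketch of the practice, assuming a random-walk Metropolis chain on a standard normal target that is deliberately started far from the bulk of the distribution. The names `run_chain` and `n_burn`, the starting point, and the burn-in length are illustrative choices, not recommendations.

```python
import math
import random


def run_chain(x0, n_steps, step_size=1.0, seed=1):
    """Random-walk Metropolis on a standard normal target (assumed toy setup)."""
    rng = random.Random(seed)
    x = x0
    chain = []
    for _ in range(n_steps):
        y = x + rng.gauss(0.0, step_size)
        if rng.random() < math.exp(min(0.0, 0.5 * (x * x - y * y))):
            x = y
        chain.append(x)
    return chain


def mean(values):
    return sum(values) / len(values)


chain = run_chain(x0=100.0, n_steps=5000)  # poor starting point on purpose
n_burn = 500                               # hypothetical burn-in length
kept = chain[n_burn:]                      # throw away the early iterations

print(f"mean with burn-in iterations included: {mean(chain):.2f}")
print(f"mean after discarding them:            {mean(kept):.2f}")
```

The early iterations still reflect the starting point rather than the target, so including them biases the estimate of the mean.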

Which sort of parameters can Hamiltonian Monte Carlo not handle?

Hamiltonian Monte Carlo cannot handle discrete parameters. It uses the gradient of the log-density to glide across the parameter space, and discrete parameters have no slopes to follow.

What is a good acceptance rate for Metropolis-Hastings?

0.234

Optimal scaling theory shows that, for Metropolis algorithms applied to certain multi-dimensional target distributions, the asymptotically optimal acceptance rate is the well-known 0.234.

How does likelihood weighting work?

Likelihood weighting is a form of importance sampling where the variables are sampled in the order defined by a belief network, and evidence is used to update the weights. The weights reflect the probability that a sample would not be rejected.
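The procedure can be sketched on a tiny belief network. The network below (Rain and Sprinkler as parents of WetGrass), its probabilities, and the function name `likelihood_weighting` are all made up for illustration. The evidence is WetGrass = True and the query is P(Rain = True | WetGrass = True).

```python
import random

# Hypothetical network: Rain -> WetGrass <- Sprinkler, with made-up numbers.
P_RAIN = 0.2
P_SPRINKLER = 0.3
P_WET = {  # P(WetGrass = True | Rain, Sprinkler)
    (True, True): 0.99,
    (True, False): 0.90,
    (False, True): 0.80,
    (False, False): 0.05,
}


def likelihood_weighting(n_samples, seed=0):
    """Estimate P(Rain = True | WetGrass = True)."""
    rng = random.Random(seed)
    weighted_rain = 0.0
    total_weight = 0.0
    for _ in range(n_samples):
        # Sample the non-evidence variables in network (parents-first) order.
        rain = rng.random() < P_RAIN
        sprinkler = rng.random() < P_SPRINKLER
        # The evidence variable is not sampled. It is fixed to its observed
        # value, and the sample is weighted by the probability of observing it.
        weight = P_WET[(rain, sprinkler)]
        total_weight += weight
        if rain:
            weighted_rain += weight
    return weighted_rain / total_weight


print(f"P(Rain | WetGrass) is roughly {likelihood_weighting(50000):.3f}")
```

Unlike rejection sampling, no sample is discarded. A sample that is inconsistent with the evidence simply receives a small weight.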

How does Gibbs sampling work?

Gibbs sampling is a Markov chain Monte Carlo method for estimating complex joint distributions. It iteratively draws a value for each variable from that variable's distribution conditional on the current values of the other variables. In contrast to the general Metropolis-Hastings algorithm, the proposal is always accepted.
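A minimal sketch, assuming the joint distribution is a bivariate normal with zero means, unit variances, and correlation `rho`. For that target each full conditional is itself normal: x given y is N(rho * y, 1 - rho^2), and symmetrically for y given x. The function names are chosen for this example.

```python
import math
import random


def gibbs_bivariate_normal(rho, n_steps, seed=0):
    """Gibbs sampler for a standard bivariate normal with correlation rho."""
    rng = random.Random(seed)
    x, y = 0.0, 0.0
    cond_sd = math.sqrt(1.0 - rho * rho)  # std. dev. of each full conditional
    samples = []
    for _ in range(n_steps):
        # Draw each variable from its conditional given the other's current value.
        x = rng.gauss(rho * y, cond_sd)
        y = rng.gauss(rho * x, cond_sd)
        samples.append((x, y))  # every draw is kept; there is no accept/reject step
    return samples


def correlation(samples):
    n = len(samples)
    mx = sum(x for x, _ in samples) / n
    my = sum(y for _, y in samples) / n
    cov = sum((x - mx) * (y - my) for x, y in samples) / n
    sx = math.sqrt(sum((x - mx) ** 2 for x, _ in samples) / n)
    sy = math.sqrt(sum((y - my) ** 2 for _, y in samples) / n)
    return cov / (sx * sy)


samples = gibbs_bivariate_normal(rho=0.8, n_steps=20000)
print(f"sample correlation = {correlation(samples):.3f}")  # should be near 0.8
```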

Is Gibbs sampling Metropolis Hastings?

Gibbs sampling, in its basic incarnation, is a special case of the Metropolis–Hastings algorithm. The point of Gibbs sampling is that given a multivariate distribution it is simpler to sample from a conditional distribution than to marginalize by integrating over a joint distribution.
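The special-case relationship can be checked with a short derivation. Assume a Gibbs step updates one component, moving from $x = (x_i, x_{-i})$ to $x' = (x_i', x_{-i})$, with the full conditional used as the proposal, $q(x' \mid x) = \pi(x_i' \mid x_{-i})$. The symbols $q$, $\alpha$, and $x_{-i}$ (all components except the $i$-th) are notation introduced here.

```latex
\begin{align*}
\alpha
  &= \min\!\left(1,\; \frac{\pi(x')\, q(x \mid x')}{\pi(x)\, q(x' \mid x)}\right) \\
  &= \min\!\left(1,\;
     \frac{\pi(x_i' \mid x_{-i})\, \pi(x_{-i})\, \pi(x_i \mid x_{-i})}
          {\pi(x_i \mid x_{-i})\, \pi(x_{-i})\, \pi(x_i' \mid x_{-i})}\right) \\
  &= \min(1,\, 1) = 1 .
\end{align*}
```

Every factor cancels, so the Metropolis-Hastings acceptance probability equals 1 and a Gibbs proposal is never rejected.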